Many of us have wondered about this question, but few have data to support an actual argument: what happens legally when AI catches a finding and the radiologist misses it? Apparently, jurors have an opinion on the matter.

This Nature Health brief communication was recently published. If you don’t have access to the journal, the authors have shared a preprint as well.

Participants acting as mock jurors passed judgment on a malpractice scenario involving a brain hemorrhage missed on CT in a stroke case.

The TL;DR on the case: AI flagged the bleed correctly. The radiologist and the final report did not. The patient was catastrophically harmed.

Two variants of the malpractice scenario were compared.

- AI-Human, in which the human reviews an AI output and renders a final report

- Human-AI-Human, in which the human first reviews the case alone, then reviews the AI output, and then renders the final report

In both cases, the final diagnosis was incorrect; in both cases, the patient was harmed.

Both scenarios had a human in the loop.

The only difference was that the second was set up as an “AI sandwich”: the human expert first evaluated the case without AI and then had an opportunity to revise that evaluation after seeing the AI output.

Does it make a difference? What do you think?

Jurors were 22 percentage points (absolute) more likely to find the radiologist liable in the AI-Human scenario than in the Human-AI-Human scenario. In other words, when the radiologist first interpreted the scan independently, and only then reviewed it again with AI input, liability dropped significantly.

To be fair, in both scenarios, the radiologist was still found liable more than half of the time.
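
To make the “absolute” part concrete, here is a minimal Python sketch of the difference between an absolute and a relative gap. The two liability rates are made-up placeholders, not figures from the paper; I picked them only because they sit above 50% and differ by 22 points.

```python
def absolute_difference(rate_a: float, rate_b: float) -> float:
    """Gap between two rates (given as fractions), in percentage points."""
    return (rate_a - rate_b) * 100


def relative_difference(rate_a: float, rate_b: float) -> float:
    """Percent increase of rate_a over rate_b."""
    return (rate_a - rate_b) / rate_b * 100


# HYPOTHETICAL liability rates, for illustration only (NOT the study's data).
ai_human = 0.75        # assumed share of jurors finding liability, AI-Human
human_ai_human = 0.53  # assumed share of jurors finding liability, Human-AI-Human

abs_diff = absolute_difference(ai_human, human_ai_human)
rel_diff = relative_difference(ai_human, human_ai_human)

print(f"Absolute difference: {abs_diff:.0f} percentage points")
print(f"Relative difference: {rel_diff:.1f}%")
```

With these placeholder rates, the same 22-point absolute gap corresponds to a much larger relative increase, which is why it matters which one a headline number refers to.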

The takeaway: Liability isn’t just about missing a diagnosis. It’s about how you demonstrate clinical judgment.

For a malpractice claim to succeed, the plaintiff must demonstrate that:

- A doctor-patient relationship existed

- The doctor provided substandard care

- The negligence caused injury

- The injury resulted in significant harm

In this case, the other three points were essentially indisputable, so the question comes down to the standard of care. A radiologist who forms an independent opinion and then cross-checks it against AI appears much closer to having met that standard.

There are limits here. This is a hypothetical scenario, not real courtroom data. And it doesn’t answer harder questions, like what happens when both the AI and the radiologist are wrong.

AI integration is not only a technical decision but also a distinctly clinical one.

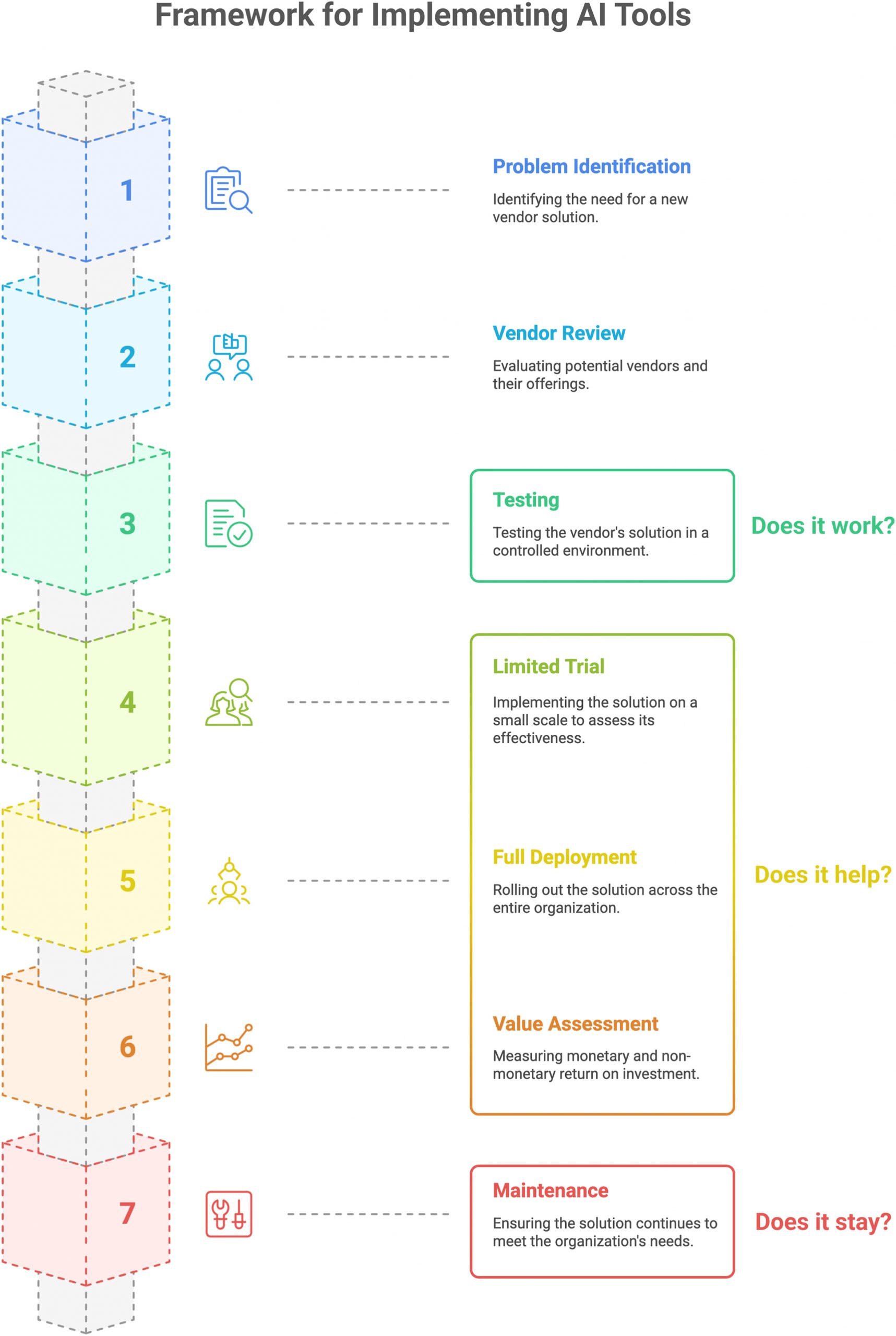

For radiology departments, that means workflow design deserves as much attention as model accuracy. There must be several distinct stages to the AI implementation.

My team’s JACR article (it’s open access!), which also came out this month, draws on a similar insight: just proving that the AI algorithm works locally is not enough. Proper clinical implementation means the AI system must be deployed in real-world practice, and its workflow must be deliberately assessed in the clinical context. In fact, we placed substantially more emphasis on clinical integration and endorse a multi-step ramp-up design that allows efficiency, effectiveness, and safety to be evaluated.

In the end, AI does not redefine the standard of care; we do, through how we choose to use it.