Most conversations about physician burnout focus on workload. This study points somewhere more fundamental. And in it, I learned a new phrase.

According to a new JAMA paper, nearly 40% of physicians report “moral distress”: knowing what the right thing is for a patient but being unable to act on it because of constraints in the system.

Burnout is depletion. Moral distress, by contrast, is misalignment.

The consequences are predictable. As moral distress rises, so do burnout, intent to reduce clinical hours, and intent to leave practice.

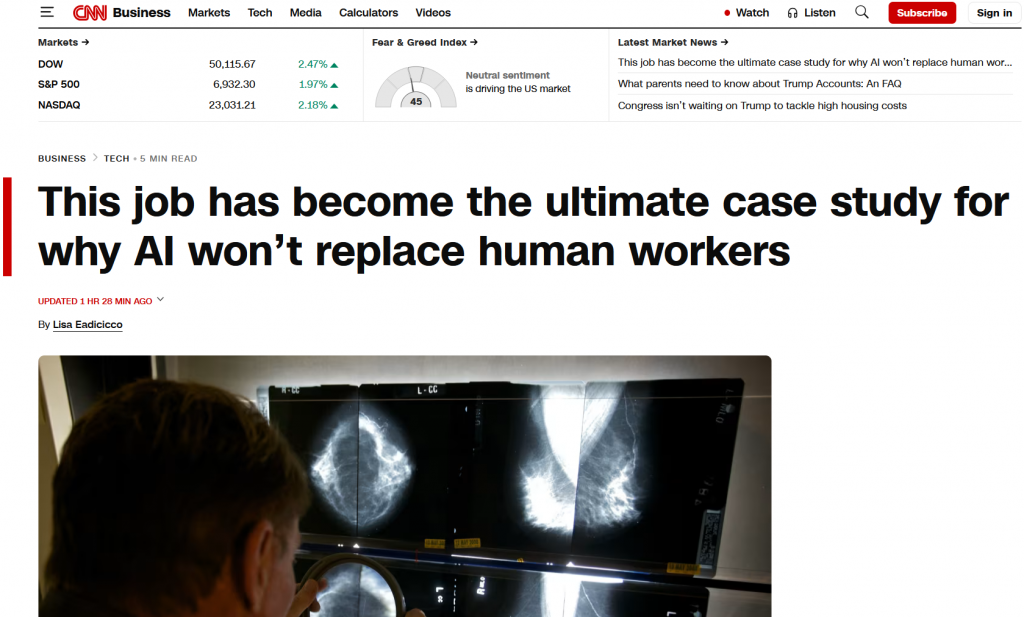

Radiology is not exempt, although it is also not an outlier. In this dataset, radiologists had odds of moral distress similar to those of other physician groups. Diagnostic radiology is often viewed as buffered, since we do less bedside care and more asynchronous work.

Yet the same structural forces apply: throughput pressure, fragmented workflows, and responsibility without full control over downstream decisions. Fighting these problems is why I first dedicated my career to informatics. Classic informatics is about using information and data to address all of them.

Moral distress is more of a meta-problem. It is a systems design problem (and ironically, at times, technology has become a contributor).

When the best way to deliver work conflicts with operational reality, the system absorbs that gap through clinician distress. Over time, that becomes burnout. Then attrition.